
NEW QUESTION 1

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are implementing a batch processing solution by using Azure HDInsight.

You plan to import 300 TB of data.

You plan to use one job that has many concurrent tasks to import the data in memory.

You need to maximize the number of concurrent tasks for the job.

What should you do?

- A. Use a shuffle join in an Apache Hive query that stores the data in a JSON format.

- B. Use a broadcast join in an Apache Hive query that stores the data in an ORC format.

- C. Increase the number of spark.executor.cores in an Apache Spark job that stores the data in a text format.

- D. Increase the number of spark.executor.instances in an Apache Spark job that stores the data in a text format.

- E. Decrease the level of parallelism in an Apache Spark job that stores the data in a text format.

- F. Use an action in an Apache Oozie workflow that stores the data in a text format.

- G. Use an Azure Data Factory linked service that stores the data in Azure Data Lake.

- H. Use an Azure Data Factory linked service that stores the data in an Azure DocumentDB database.

Answer: C

Explanation:

References: https://blog.cloudera.com/blog/2015/03/how-to-tune-your-apache-spark-jobspart-2/
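A rough way to reason about this question, following the executor and core discussion in the Cloudera tuning guide referenced above: each executor can run about `spark.executor.cores / spark.task.cpus` tasks at once, and the application's ceiling is that figure times the number of executors. The sketch below is a simplified model, not a Spark API; it ignores YARN container limits, memory overhead, and the number of partitions, all of which can also cap parallelism, and the settings in it are hypothetical.

```python
def max_concurrent_tasks(executor_instances: int, executor_cores: int,
                         task_cpus: int = 1) -> int:
    """Upper bound on the number of tasks a Spark application runs at once.

    Each executor runs up to executor_cores // task_cpus tasks in parallel
    (spark.task.cpus defaults to 1), and the application-wide bound is that
    figure multiplied by the number of executors.
    """
    if min(executor_instances, executor_cores, task_cpus) < 1:
        raise ValueError("all settings must be positive integers")
    return executor_instances * (executor_cores // task_cpus)


# Hypothetical settings, not taken from the question.
baseline = max_concurrent_tasks(executor_instances=4, executor_cores=2)
more_cores = max_concurrent_tasks(executor_instances=4, executor_cores=5)
print(baseline, more_cores)  # 8 20
```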

NEW QUESTION 2

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are building a security tracking solution in Apache Kafka to parse security logs. The security logs record an entry each time a user attempts to access an application. Each log entry contains the IP address used to make the attempt and the country from which the attempt originated.

You need to receive notifications when an IP address from outside of the United States is used to access the application.

Solution: Create two new brokers. Create a file import process to send messages. Run the producer.

Does this meet the goal?

- A. Yes

- B. No

Answer: B

NEW QUESTION 3

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are implementing a batch processing solution by using Azure HDInsight.

You need to integrate Apache Sqoop data and to chain complex jobs. The data and jobs will implement MapReduce.

What should you do?

- A. Use a shuffle join in an Apache Hive query that stores the data in a JSON format.

- B. Use a broadcast join in an Apache Hive query that stores the data in an ORC format.

- C. Increase the number of spark.executor.cores in an Apache Spark job that stores the data in a text format.

- D. Increase the number of spark.executor.instances in an Apache Spark job that stores the data in a text format.

- E. Decrease the level of parallelism in an Apache Spark job that stores the data in a text format.

- F. Use an action in an Apache Oozie workflow that stores the data in a text format.

- G. Use an Azure Data Factory linked service that stores the data in Azure Data Lake.

- H. Use an Azure Data Factory linked service that stores the data in an Azure DocumentDB database.

Answer: F

NEW QUESTION 4

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are implementing a batch processing solution by using Azure HDInsight. You have a table that contains sales data.

You plan to implement a query that will return the number of orders by zip code.

You need to minimize the execution time of the queries and to maximize the compression level of the resulting data.

What should you do?

- A. Use a shuffle join in an Apache Hive query that stores the data in a JSON format.

- B. Use a broadcast join in an Apache Hive query that stores the data in an ORC format.

- C. Increase the number of spark.executor.cores in an Apache Spark job that stores the data in a text format.

- D. Increase the number of spark.executor.instances in an Apache Spark job that stores the data in a text format.

- E. Decrease the level of parallelism in an Apache Spark job that stores the data in a text format.

- F. Use an action in an Apache Oozie workflow that stores the data in a text format.

- G. Use an Azure Data Factory linked service that stores the data in Azure Data Lake.

- H. Use an Azure Data Factory linked service that stores the data in an Azure DocumentDB database.

Answer: B

NEW QUESTION 5

DRAG DROP

Note: This question is part of a series of questions that present the same Scenario. Each question I the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution while others might not have correct solution.

Start of Repeated Scenario:

You are planning a big data infrastructure by using an Apache Spark Cluster in Azure HDInsight. The cluster has 24 processor cores and 512 GB of memory.

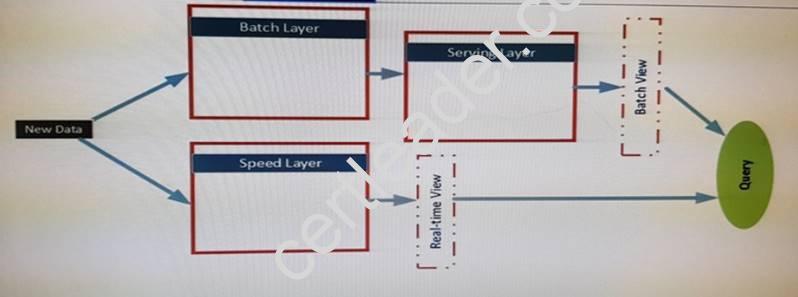

The Architecture of the infrastructure is shown in the exhibit:

The architecture will be used by the following users:

* Support analysts who run applications that will use REST to submit Spark jobs.

* Business analysts who use JDBC and ODBC client applications from a real-time view. The business analysts run monitoring queries to access aggregate results for 15 minutes. The results will be referenced by subsequent queries.

* Data analysts who publish notebooks drawn from batch layer, serving layer, and speed layer queries. All of the notebooks must support native interpreters for data sources that are batch processed. The serving layer queries are written in Apache Hive and must support multiple sessions. Unique GUIDs are used across the data sources, which allow the data analysts to use Spark SQL.

The data sources in the batch layer share a common storage container. The following data sources are used:

* Hive for sales data

* Apache HBase for operations data

* HBase for logistics data by using a single region server

End of Repeated Scenario.

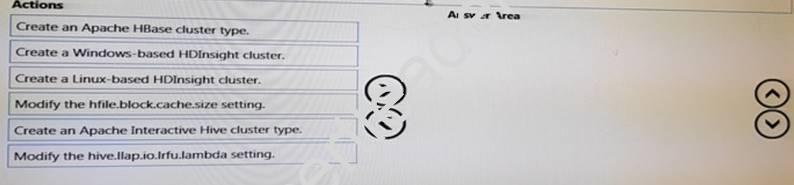

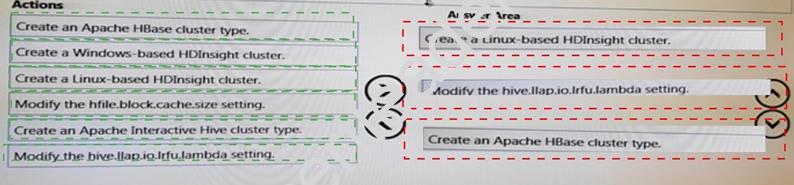

The business analysts need to monitor the sales data. The queries must be faster and more interactive than the batch layer queries.

You need to create a new infrastructure to support the queries. The solution must ensure that you can tune the cache policies of the queries.

Which three actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area.


NEW QUESTION 6

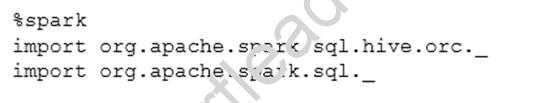

You have an Apache Spark cluster in Azure HDInsight.

You execute the following command,

What is the result of running the command?

- A. The Hive ORC library is imported to Spark and external tables in ORC format are created.

- B. The Spark library is imported and the data is loaded to an Apache Hive table.

- C. The Hive ORC library is imported to Spark and the ORC-formatted data stored in Apache Hive tables becomes accessible.

- D. The Spark library is imported and Scala functions are executed.

Answer: C

NEW QUESTION 7

You have an Azure HDInsight cluster.

You need to store data in a file format that maximizes compression and increases read performance.

Which type of file format should you use?

- A. ORC

- B. Apache Parquet

- C. Apache Avro

- D. Apache Sequence

Answer: A

Explanation:

https://docs.microsoft.com/en-us/azure/data-factory/data-factory-supported-file-and-compression-formats
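Part of why columnar formats such as ORC compress well is that the values of one column are stored together and run through lightweight encodings (for example, dictionary encoding for strings and run-length encoding for integers) before a general-purpose codec such as ZLIB or Snappy is applied. The following toy sketch shows those two encodings on a hypothetical zip-code column; it illustrates the idea only and is not ORC's actual on-disk format.

```python
from itertools import groupby


def dictionary_encode(values):
    """Replace each string with a small integer id; return (dictionary, ids)."""
    dictionary = {}
    ids = []
    for value in values:
        # setdefault assigns the next id the first time a value is seen.
        ids.append(dictionary.setdefault(value, len(dictionary)))
    return list(dictionary), ids


def run_length_encode(ids):
    """Collapse runs of identical ids into (id, run_length) pairs."""
    return [(key, sum(1 for _ in group)) for key, group in groupby(ids)]


def decode(dictionary, runs):
    """Expand (id, run_length) pairs back into the original strings."""
    return [dictionary[key] for key, length in runs for _ in range(length)]


# A hypothetical sorted zip-code column.
zip_column = ["10001", "10001", "10001", "98052", "98052", "60601"]
dictionary, ids = dictionary_encode(zip_column)
runs = run_length_encode(ids)
print(dictionary)  # ['10001', '98052', '60601']
print(runs)        # [(0, 3), (1, 2), (2, 1)]
assert decode(dictionary, runs) == zip_column
```

Sorting or clustering data on a column before writing tends to lengthen these runs, which is one reason sort order can affect the size of columnar files.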

NEW QUESTION 8

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

Start of Repeated Scenario:

You have an initial dataset that contains crime data from major cities.

You plan to build training models from the training data. You plan to automate the process of adding more data to the training models and of training the models by using the additional data, including data that is collected in near real time. The system will be used to analyze event data gathered from many different sources, such as Internet of Things (IoT) devices, live video surveillance, and traffic activities, and to generate predictions of an increased crime risk at a particular time and place.

You have an incoming data stream from Twitter and an incoming data stream from Facebook, which are event-based only, rather than time-based. You also have a time interval stream every 10 seconds.

The data is in a key/value pair format. The value field represents a number that defines how many times a hashtag occurs within a Facebook post or how many times a tweet that contains a specific hashtag is retweeted.

You must use the appropriate data storage, stream analytics techniques, and Azure HDInsight cluster types for the various tasks associated with the processing pipeline.

End of repeated scenario.

You plan to consolidate all of the streams into a single timeline, even though none of the streams report events at the same interval.

You need to aggregate the data from the feeds to align with the time interval stream. The result must be the sum of all values for each key within a 10-second interval, with the keys being the hashtags.

Which function should you use?

- A. countByWindow

- B. reduceByWindow

- C. reduceByKeyAndWindow

- D. countByValueAndWindow

- E. updateStateByKey

Answer: C
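The required aggregation, summing the values for each hashtag inside each 10-second interval, is a windowed reduce by key. In Spark Streaming, `reduceByKeyAndWindow` performs this kind of per-key reduction over a window of batches, and setting both the window length and the slide interval to 10 seconds gives non-overlapping windows. The pure-Python sketch below only mimics that result on a hypothetical list of `(timestamp, hashtag, value)` events; it is not the Spark API.

```python
from collections import defaultdict


def sum_by_key_per_window(events, window_seconds=10):
    """Sum values per key inside fixed, non-overlapping time windows.

    events: iterable of (timestamp_seconds, key, value) tuples, in any order.
    Returns {window_start: {key: total}} ordered by window start.
    """
    windows = defaultdict(lambda: defaultdict(int))
    for timestamp, key, value in events:
        # Align each event to the start of its 10-second bucket.
        window_start = int(timestamp // window_seconds) * window_seconds
        windows[window_start][key] += value
    return {start: dict(totals) for start, totals in sorted(windows.items())}


# Hypothetical events merged from the Twitter and Facebook feeds.
events = [
    (1.5, "#crime", 3),    # Twitter: retweet count
    (4.0, "#crime", 2),    # Facebook: occurrences in a post
    (9.9, "#traffic", 1),
    (12.0, "#crime", 5),
]
print(sum_by_key_per_window(events))
# {0: {'#crime': 5, '#traffic': 1}, 10: {'#crime': 5}}
```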

NEW QUESTION 9

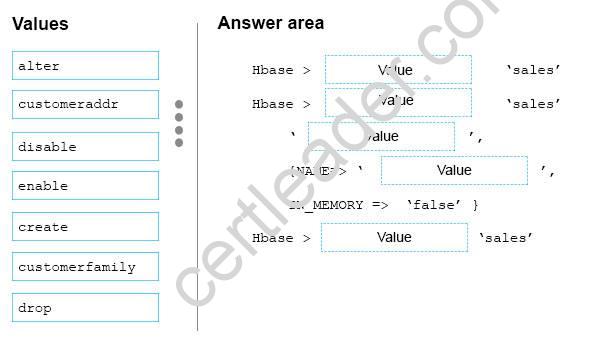

DRAG DROP

You have an Apache HBase cluster in Azure HDInsight. The cluster has a table named sales that contains a column family named customerfamily.

You need to add a new column family named customeraddr to the sales table.

How should you complete the command? To answer, drag the appropriate values to the correct targets. Each value may be used once, more than once or not at all.


Explanation:

hbase> disable 'sales'

hbase> alter 'sales', 'customerfamily', {NAME => 'customeraddr', IN_MEMORY => false}

hbase> enable 'sales'

NEW QUESTION 10

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You are building a security tracking solution in Apache Kafka to parse security logs. The security logs record an entry each time a user attempts to access an application. Each log entry contains the IP address used to make the attempt and the country from which the attempt originated.

You need to receive notifications when an IP address from outside of the United States is used to access the application.

Solution: Create new topics. Create a file import process to send messages. Start the consumer and run the producer.

Does this meet the goal?

- A. Yes

- B. No

Answer: A
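The flow in this solution (a topic, a file import process that produces messages, and a consumer that reacts to them) can be sketched in plain Python. The `deque` below is a hypothetical stand-in for a Kafka topic, and the `"<ip>,<country>"` log format is an assumption; a real implementation would use a Kafka client library against the cluster's brokers. In the actual solution the consumer is started first and waits for messages, whereas this sketch runs the producer and the consumer one after the other for simplicity.

```python
from collections import deque

# Hypothetical in-memory stand-in for a Kafka topic.
security_logs_topic = deque()


def produce_from_file(lines, topic):
    """File import process: publish each non-empty log line as a message."""
    for line in lines:
        line = line.strip()
        if line:
            topic.append(line)


def consume_alerts(topic, home_country="US"):
    """Consumer: read every pending message and alert on foreign access."""
    alerts = []
    while topic:
        ip_address, country = (part.strip() for part in topic.popleft().split(","))
        if country != home_country:
            alerts.append(f"ALERT: access from {ip_address} ({country})")
    return alerts


# Assumed log format: "<ip address>,<country code>".
log_file = ["203.0.113.7,US", "198.51.100.23,FR", "", "192.0.2.44,BR"]
produce_from_file(log_file, security_logs_topic)
for alert in consume_alerts(security_logs_topic):
    print(alert)
```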

NEW QUESTION 11

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You need to deploy an HDInsight cluster that will provide in-memory processing, interactive queries, and micro-batch stream processing. The cluster has the following requirements:

• Uses Azure Data Lake Store as the primary storage

• Can be used by HDInsight applications

What should you do?

- A. Use an Azure PowerShell script to create and configure a premium HDInsight cluster. Specify Apache Hadoop as the cluster type and use Linux as the operating system.

- B. Use the Azure portal to create a standard HDInsight cluster. Specify Apache Spark as the cluster type and use Linux as the operating system.

- C. Use an Azure PowerShell script to create a standard HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

- D. Use an Azure PowerShell script to create a standard HDInsight cluster. Specify Apache Storm as the cluster type and use Windows as the operating system.

- E. Use an Azure PowerShell script to create a premium HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

- F. Use the Azure portal to create a standard HDInsight cluster. Specify Apache Interactive Hive as the cluster type and use Windows as the operating system.

- G. Use the Azure portal to create a standard HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

Answer: B

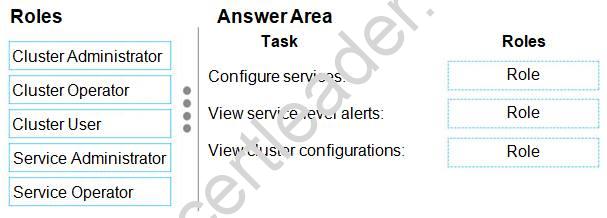

NEW QUESTION 12

DRAG DROP

You have a domain-joined Azure HDInsight cluster. You plan to assign permissions to several support staff.

You need to assign roles to the staff so that they can perform specific tasks.

The solution must use the principle of least privilege.

Which role should you assign for each task? To answer, drag the appropriate roles to the correct tasks. Each role may be used once, more than once, or not at all. You may need to drag the split bar between panes or scroll to view content.

NOTE: Each correct selection is worth one point.


NEW QUESTION 13

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

Start of Repeated Scenario:

You have an initial dataset that contains crime data from major cities.

You plan to build training models from the training data. You plan to automate the process of adding more data to the training models and of training the models by using the additional data, including data that is collected in near real time. The system will be used to analyze event data gathered from many different sources, such as Internet of Things (IoT) devices, live video surveillance, and traffic activities, and to generate predictions of an increased crime risk at a particular time and place.

You have an incoming data stream from Twitter and an incoming data stream from Facebook, which are event-based only, rather than time-based. You also have a time interval stream every 10 seconds.

The data is in a key/value pair format. The value field represents a number that defines how many times a hashtag occurs within a Facebook post or how many times a tweet that contains a specific hashtag is retweeted.

You must use the appropriate data storage, stream analytics techniques, and Azure HDInsight cluster types for the various tasks associated with the processing pipeline.

End of repeated scenario.

You are designing the real-time portion of the input stream processing. The input will be a continuous stream of data and each record will be processed one at a time. The data will come from an Apache Kafka producer.

You need to identify which HDInsight cluster to use for the final processing of the input data. This will be used to generate continuous statistics and real-time analytics. The latency to process each record must be less than one millisecond and tasks must be performed in parallel.

Which type of cluster should you identify?

- A. Apache Storm

- B. Apache Hadoop

- C. Apache HBase

- D. Apache Spark

Answer: A

Explanation:

References: https://docs.microsoft.com/en-us/azure/hdinsight/hdinsight-storm-overview
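Storm topologies process each tuple as it arrives, so a bolt that produces continuous statistics needs a constant amount of work per record. One standard technique for that is Welford's online algorithm for the running mean and variance, shown below in plain Python as an illustration chosen here, not something the question specifies; the class name and input values are hypothetical, and this is not Storm's API.

```python
class RunningStats:
    """Continuously updated count, mean, and variance (Welford's algorithm)."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # sum of squared deviations from the current mean

    def update(self, value):
        # O(1) work per record, so each tuple is handled as it arrives.
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    @property
    def variance(self):
        # Sample variance; undefined for fewer than two records.
        return self._m2 / (self.count - 1) if self.count > 1 else float("nan")


stats = RunningStats()
for reading in [4.0, 7.0, 13.0, 16.0]:  # hypothetical per-record values
    stats.update(reading)
print(stats.count, stats.mean, stats.variance)  # 4 10.0 30.0
```

Because the state is only three numbers, it avoids storing past records, which keeps per-record latency flat as the stream grows.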

NEW QUESTION 14

Note: This question is part of a series of questions that present the same scenario. Each question in the series contains a unique solution that might meet the stated goals. Some question sets might have more than one correct solution, while others might not have a correct solution.

You need to deploy an HDInsight cluster that will have a custom Apache Ambari configuration.

The cluster will be joined to a domain and must perform the following:

* Fast data analytics and cluster computing by using in-memory processing

* Interactive queries and micro-batch stream processing

What should you do?

- A. Use an Azure PowerShell script to create and configure a premium HDInsight cluster. Specify Apache Hadoop as the cluster type and use Linux as the operating system.

- B. Use the Azure portal to create a standard HDInsight cluster. Specify Apache Spark as the cluster type and use Linux as the operating system.

- C. Use an Azure PowerShell script to create a standard HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

- D. Use an Azure PowerShell script to create a standard HDInsight cluster. Specify Apache Storm as the cluster type and use Windows as the operating system.

- E. Use an Azure PowerShell script to create a premium HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

- F. Use the Azure portal to create a standard HDInsight cluster. Specify Apache Interactive Hive as the cluster type and use Windows as the operating system.

- G. Use the Azure portal to create a standard HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

Answer: B

NEW QUESTION 15

You have an Apache Spark cluster in Azure HDInsight. You plan to join a large table and a lookup table.

You need to minimize data transfers during the join operation.

What should you do?

- A. Use the reduceByKey function.

- B. Use a Broadcast variable.

- C. Repartition the data.

- D. Use the DISK_ONLY storage level.

Answer: B
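A broadcast join avoids shuffling the large table: the small lookup table is copied once to every worker (in Spark, `SparkContext.broadcast` ships such a read-only value to each executor), and each partition of the large table is joined locally against an in-memory hash map. The sketch below shows that hash-join step in plain Python with hypothetical tables; it models the technique, not Spark's implementation.

```python
def broadcast_hash_join(large_rows, lookup_rows, key):
    """Join a large table with a small lookup table without shuffling.

    The small table is turned into a dict once (in Spark, this is the value
    that would be broadcast to every executor); each large-table row is then
    matched locally, so the large table never moves across the network.
    """
    lookup = {row[key]: row for row in lookup_rows}
    joined = []
    for row in large_rows:
        match = lookup.get(row[key])
        if match is not None:  # inner-join semantics: drop unmatched rows
            joined.append({**row, **match})
    return joined


# Hypothetical tables.
orders = [
    {"order_id": 1, "zip": "98052"},
    {"order_id": 2, "zip": "10001"},
    {"order_id": 3, "zip": "99999"},
]
zip_lookup = [
    {"zip": "98052", "city": "Redmond"},
    {"zip": "10001", "city": "New York"},
]
for row in broadcast_hash_join(orders, zip_lookup, key="zip"):
    print(row)
```

The design only pays off while the lookup table fits comfortably in each worker's memory; two large tables generally still need a shuffle-based join.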

NEW QUESTION 16

You have an Apache Hadoop cluster in Azure HDInsight that has a head node and three data nodes. You have a MapReduce job.

You receive a notification that a data node failed.

You need to identify which component caused the failure.

Which tool should you use?

- A. JobTracker

- B. TaskTracker

- C. ResourceManager

- D. ApplicationMaster

Answer: C

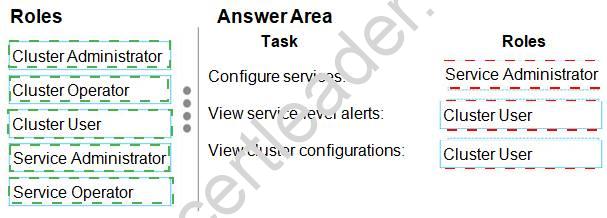

NEW QUESTION 17

DRAG DROP

You have an Apache Hive cluster in Azure HDInsight. You need to tune a Hive query to meet the following requirements:

• Use the Tez engine.

• Process 1,024 rows in a batch.

How should you complete this query? To answer, drag the appropriate values to the correct targets.
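The 1,024-row requirement corresponds to Hive's vectorized query execution, which processes a block of 1,024 rows at a time instead of one row at a time and is controlled by the `hive.vectorized.execution.enabled` property. The plain-Python sketch below only illustrates the batching idea on hypothetical data; it does not reproduce Hive's column-vector implementation.

```python
from itertools import islice

BATCH_SIZE = 1024  # rows per batch, matching the requirement in the question


def row_batches(rows, batch_size=BATCH_SIZE):
    """Yield lists of up to batch_size rows, as a vectorized engine would."""
    iterator = iter(rows)
    while batch := list(islice(iterator, batch_size)):
        yield batch


def batch_sum(rows, column):
    """Sum one column by processing whole batches instead of single rows."""
    total = 0
    for batch in row_batches(rows):
        # One tight loop per batch; Hive's vectorized operators work on
        # column vectors inside each batch in a similar spirit.
        total += sum(row[column] for row in batch)
    return total


rows = [{"amount": 1} for _ in range(2500)]  # hypothetical data
print([len(b) for b in row_batches(rows)])  # [1024, 1024, 452]
print(batch_sum(rows, "amount"))            # 2500
```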


NEW QUESTION 18

Note: This question is part of a series of questions that use the same or similar answer choices. An answer choice may be correct for more than one question in the series. Each question is independent of the other questions in this series. Information and details provided in a question apply only to that question.

You need to deploy an enterprise data warehouse that will support in-memory analytics. The data warehouse must support connections that use the Microsoft Hive ODBC Driver and Beeline. The data warehouse will be managed by using Apache Ambari only.

What should you do?

- A. Use an Azure PowerShell script to create and configure a premium HDInsight cluster. Specify Apache Hadoop as the cluster type and use Linux as the operating system.

- B. Use the Azure portal to create a standard HDInsight cluster. Specify Apache Spark as the cluster type and use Linux as the operating system.

- C. Use an Azure PowerShell script to create a standard HDInsight cluster. Specify Apache HBase as the cluster type and use Windows as the operating system.

- D. Use an Azure PowerShell script to create a standard HDInsight cluster. Specify Apache Storm as the cluster type and use Windows as the operating system.

- E. Use an Azure PowerShell script to create a premium HDInsight cluster. Specify Apache HBase as the cluster type and use Linux as the operating system.

- F. Use the Azure portal to create a standard HDInsight cluster. Specify Apache Interactive Hive as the cluster type and use Linux as the operating system.

- G. Use the Azure portal to create a standard HDInsight cluster. Specify Apache HBase as the cluster type and use Linux as the operating system.

Answer: F

Explanation:

References: https://docs.microsoft.com/en-us/azure/hdinsight/hdinsight-hadoop-useinteractive-hive
